Generative AI is revolutionizing the field of artificial intelligence, transforming how machines interact with humans—from producing realistic images and videos to generating human-like text. However, achieving consistent accuracy and minimizing bias requires more than innovation alone. It demands high-quality data, thorough evaluation, precise fine-tuning, and continuous model refinement through Reinforcement Learning from Human Feedback (RLHF).

At Neurotech AI, we specialize in strengthening generative AI models through precise data annotation and comprehensive model evaluation. Our approach ensures that models are not only cutting-edge but also dependable, scalable, and ready for real-world applications.

We curate and refine data to improve the accuracy and performance of large language models (LLMs). Our expert teams perform in-depth audits and rigorous quality checks on generative AI outputs to keep standards consistently high.

Our solutions optimize small language models (SLMs) for domain-specific use cases, boosting efficiency and performance, especially in resource-constrained environments such as mobile devices and edge computing systems.

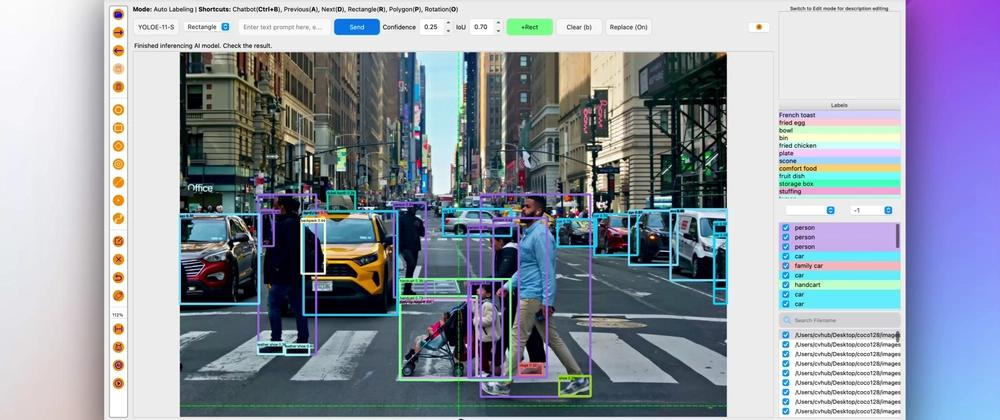

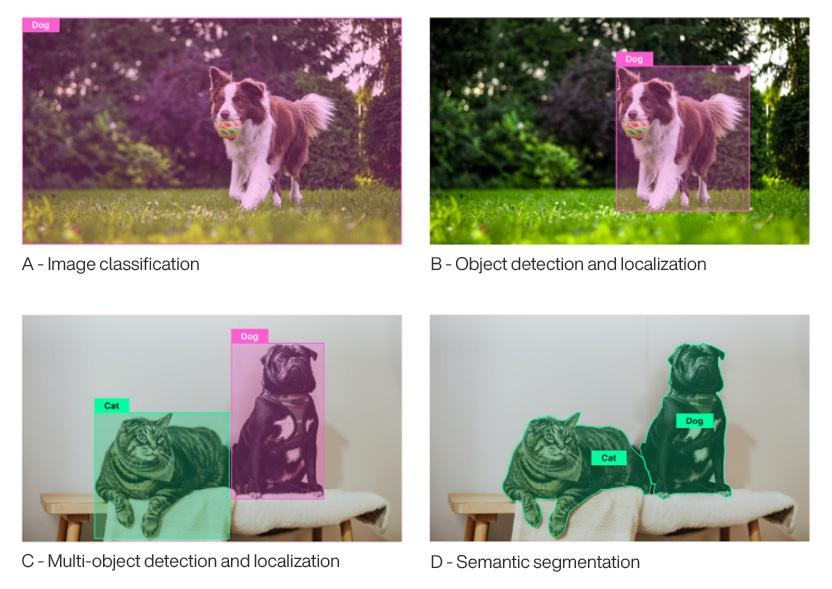

Our team enhances image segmentation processes to detect edge cases, improve data quality, and optimize model deployment. This enables more reliable, adaptable, and precise visual intelligence for critical applications such as medical imaging and autonomous driving.

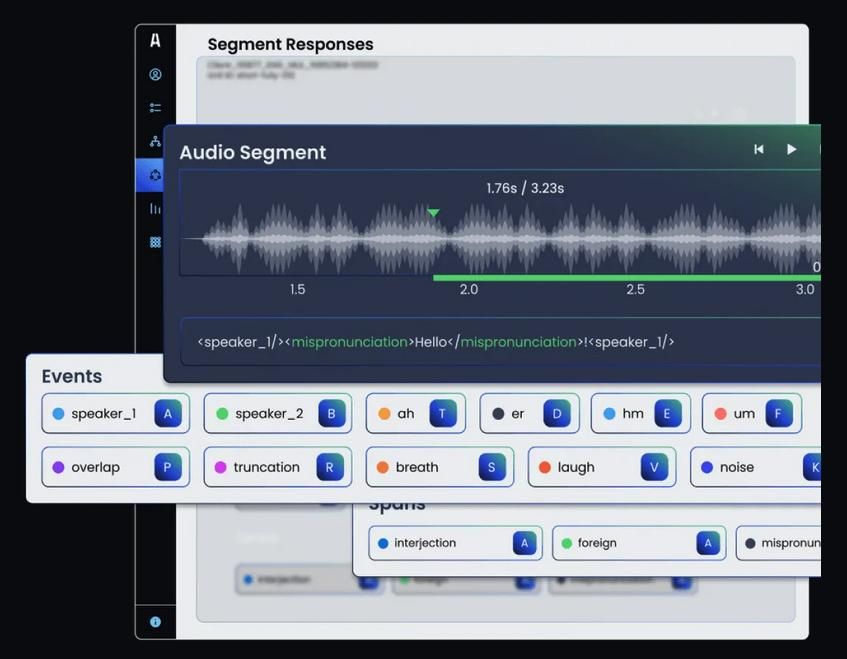

We curate diverse datasets across text, image, and audio to refine multimodal models for higher accuracy. Using RLHF, we reduce harmful or biased outputs, tailor models for specific markets, and limit misinformation across all modalities.

In this workflow, human annotators review multiple AI-generated responses and rank or score them based on quality, accuracy, and safety. These rankings are then used to fine-tune the model, ensuring outputs are more aligned with human expectations and ethical standards.
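As a rough illustration of how ranked responses can become training data, the sketch below expands one annotator ranking into pairwise preferences, a format commonly used for preference-based fine-tuning. It is a minimal sketch under assumptions: the function name `rankings_to_pairs` and the `prompt`/`chosen`/`rejected` field names are hypothetical and do not describe Neurotech AI's actual pipeline.

```python
from itertools import combinations


def rankings_to_pairs(prompt, ranked_responses):
    """Expand one ranking (best response first) into pairwise preferences.

    Hypothetical helper: the field names below are illustrative only.
    """
    pairs = []
    # combinations() preserves input order, so the first element of each
    # pair is always the response the annotator ranked higher.
    for better, worse in combinations(ranked_responses, 2):
        pairs.append({"prompt": prompt, "chosen": better, "rejected": worse})
    return pairs


example_pairs = rankings_to_pairs(
    "Explain RLHF in one sentence.",
    ["clear and accurate answer", "vague answer", "off-topic answer"],
)
```

A ranking of three responses yields three pairs; each pair records which response a human preferred, which is the signal a later fine-tuning step would learn from.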

The image represents adversarial testing where experts input challenging or harmful prompts to identify model weaknesses. Responses are flagged, categorized, and analyzed to improve robustness, safety, and compliance.

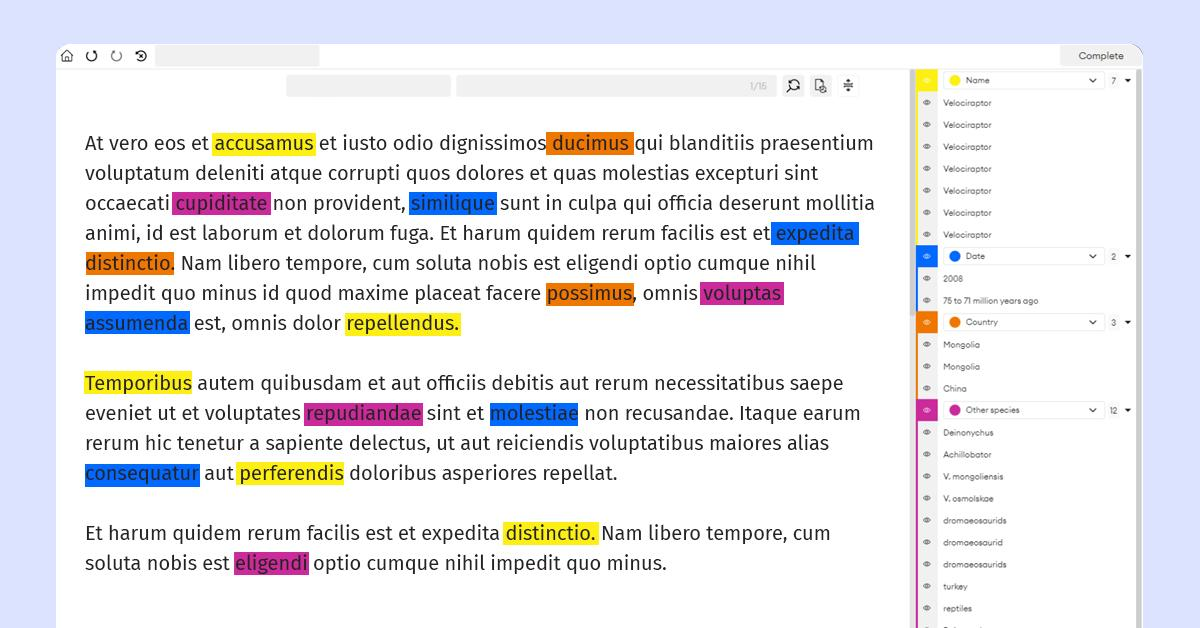

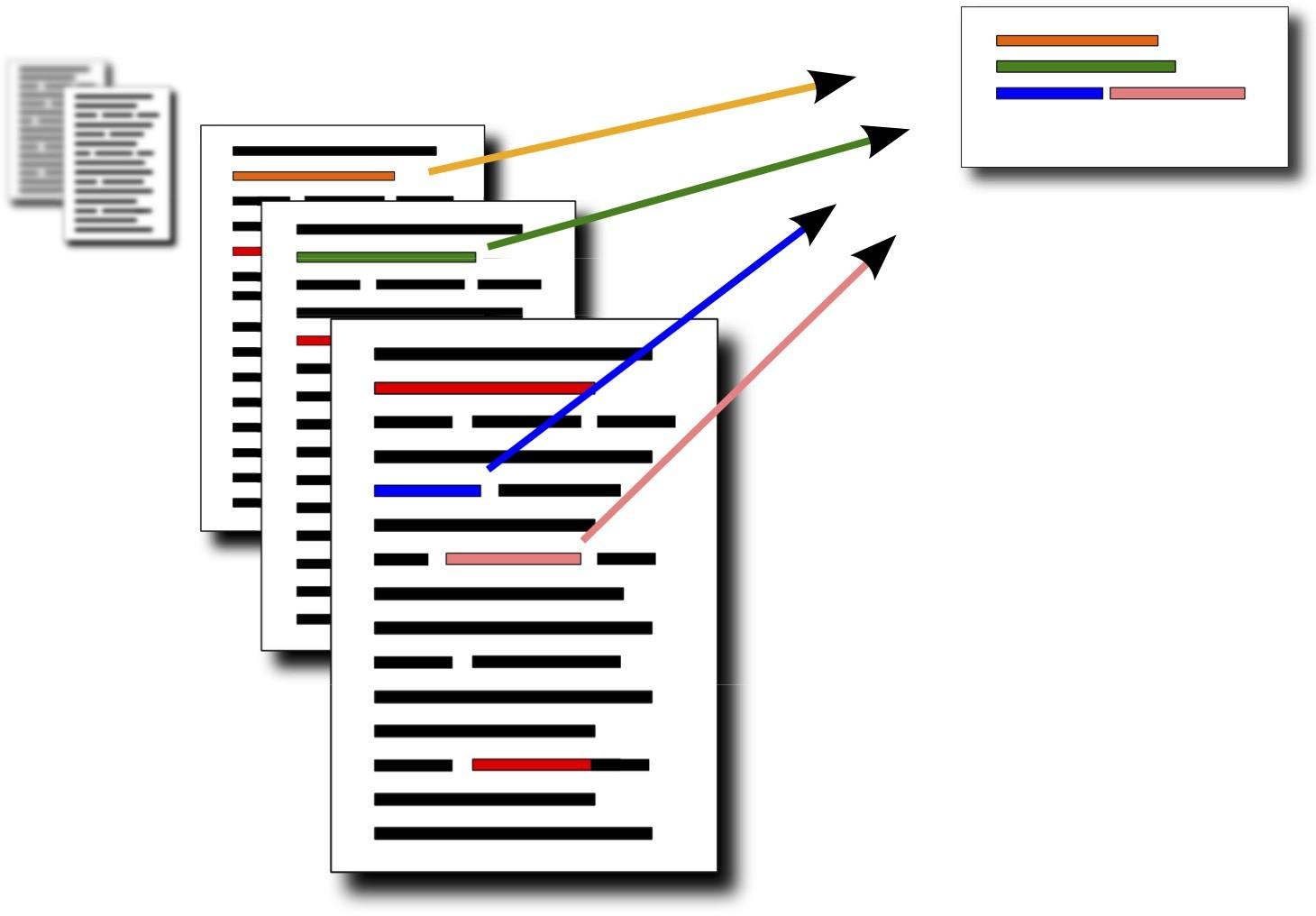

Annotators highlight key sentences or sections from long documents to create concise summaries. This helps train AI models to extract essential information and generate accurate, context-aware summaries.
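The highlighting step above could be represented, for example, as a list of sentence indices per document. The sketch below assembles a reference summary from those indices; the function name `extractive_summary` and the index-based annotation format are assumptions made for illustration, not a description of a specific tool.

```python
def extractive_summary(sentences, highlighted):
    """Join annotator-highlighted sentences in document order.

    `sentences` is the document split into sentences; `highlighted` holds
    the indices an annotator marked as essential (hypothetical format).
    """
    # Deduplicate and sort so the summary follows the original order,
    # and ignore indices that fall outside the document.
    keep = sorted(set(highlighted))
    return " ".join(sentences[i] for i in keep if 0 <= i < len(sentences))


doc = ["Revenue rose 12%.", "The weather was mild.", "Costs fell 3%."]
summary = extractive_summary(doc, [2, 0])
```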

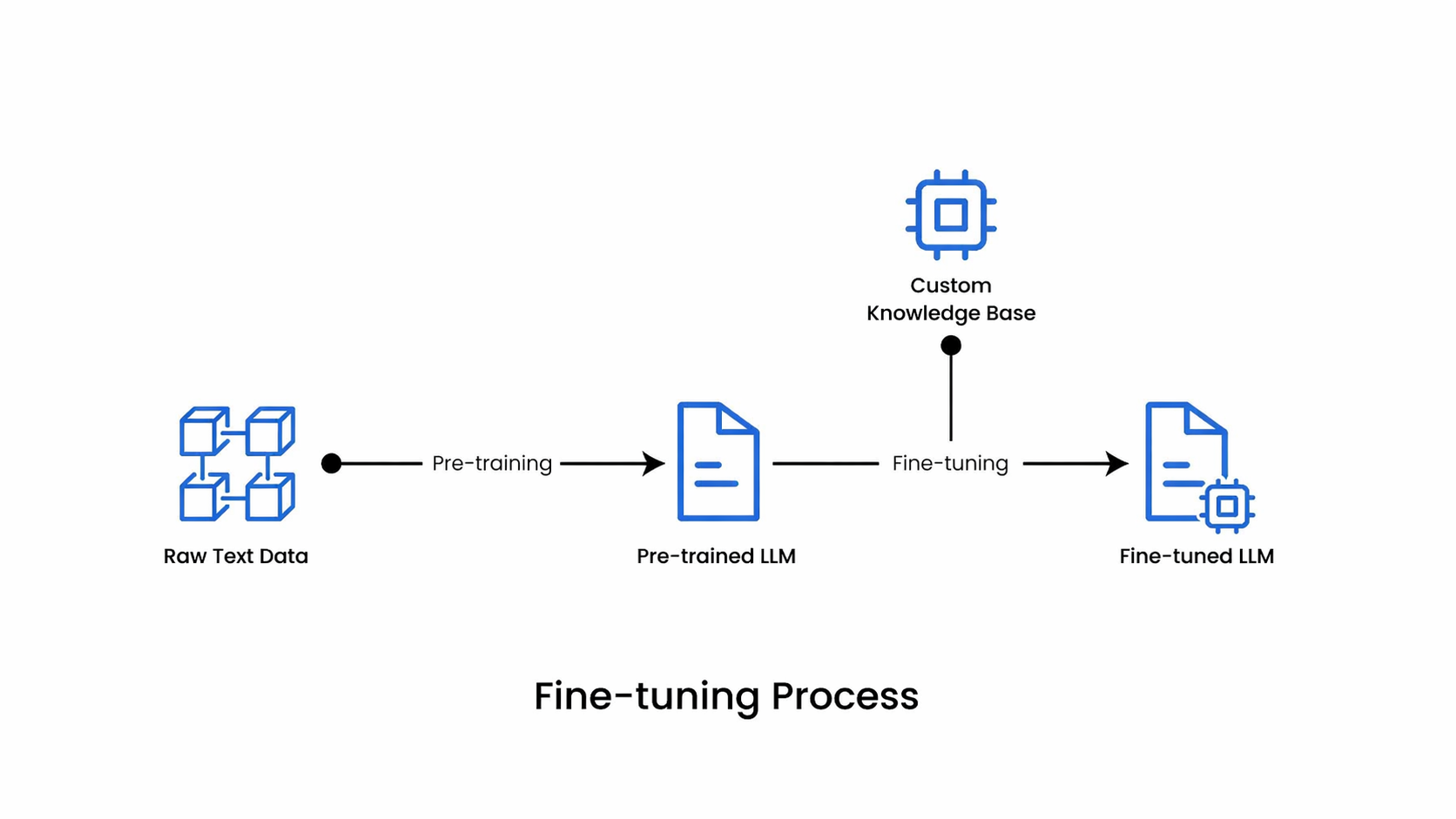

The visual shows how a pre-trained model is refined using domain-specific labeled data. Adjustments improve accuracy and performance for targeted applications such as healthcare, finance, or customer support.

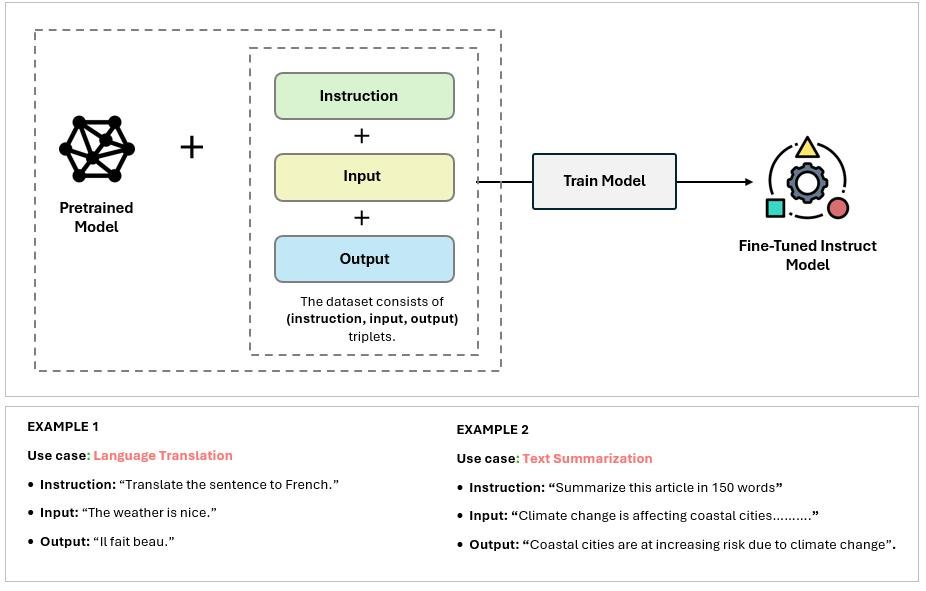

This shows curated prompt-response pairs where high-quality answers are mapped to inputs. These datasets train models to generate relevant, accurate, and context-aware responses.
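Curated pairs like these are often stored one record per line in JSON Lines format. The sketch below shows one possible way to serialize them; the `prompt` and `response` keys and the helper name `to_jsonl` are hypothetical choices, and real training frameworks may expect a different schema.

```python
import json


def to_jsonl(pairs):
    """Serialize (prompt, response) pairs to a JSON Lines string.

    Pairs with an empty prompt or response are skipped as a basic
    quality check. Key names are illustrative assumptions.
    """
    lines = []
    for prompt, response in pairs:
        prompt, response = prompt.strip(), response.strip()
        if not prompt or not response:
            continue
        lines.append(json.dumps({"prompt": prompt, "response": response}, ensure_ascii=False))
    return "\n".join(lines)


jsonl_text = to_jsonl([
    ("What is the refund window?", "Refunds are accepted within 30 days."),
    ("Unanswered question", "   "),
])
```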

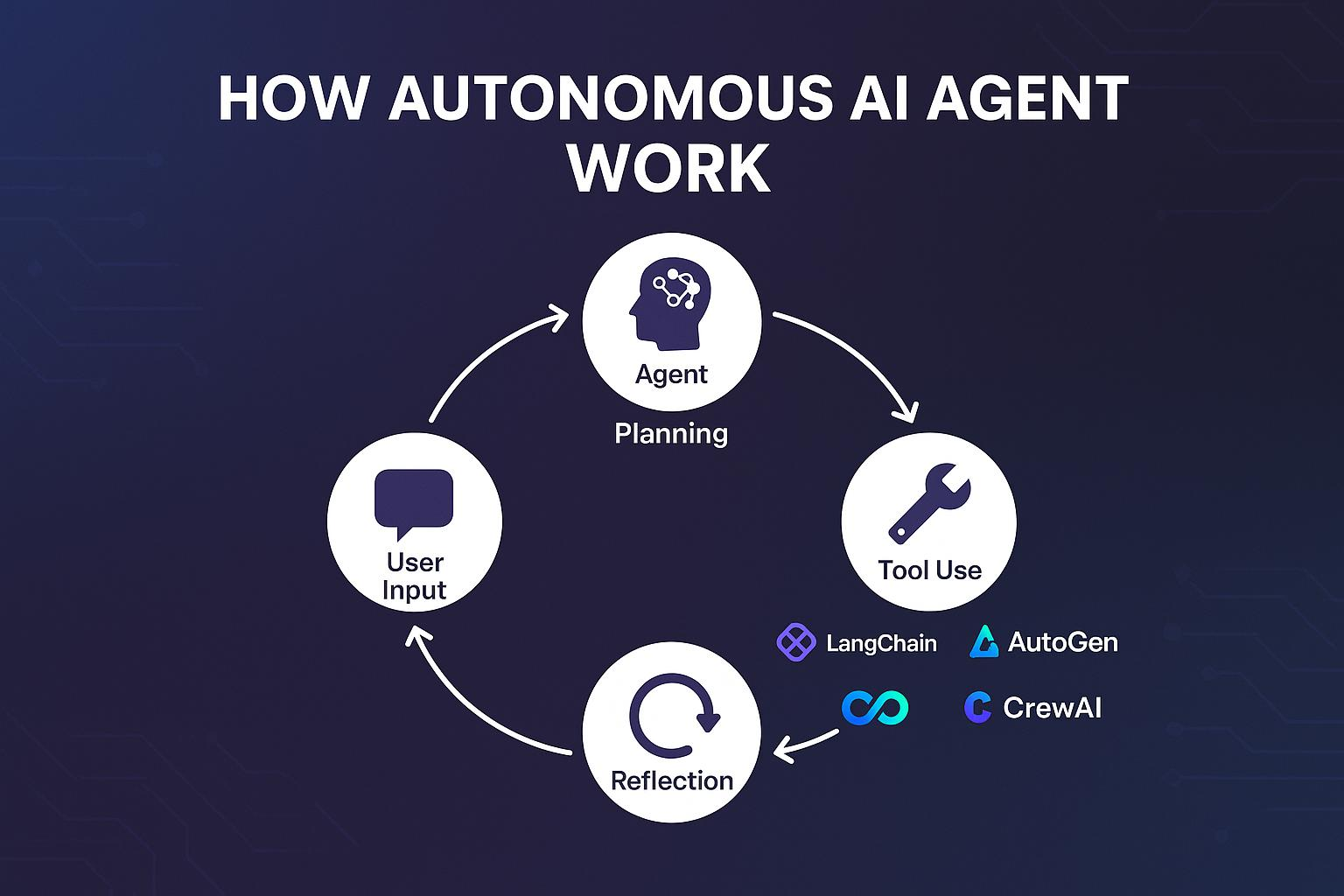

The image illustrates multi-step workflows where AI plans, executes, and refines actions autonomously. Annotation focuses on decision paths, task sequences, and outcomes for intelligent automation.

Reliable, secure, and scalable AI data solutions tailored to your needs

High-quality, accurate datasets tailored to your specific AI application needs.

End-to-end data protection with strict workflows ensuring complete confidentiality.

Efficient handling of projects at any scale without compromising speed or quality.

Pay-as-you-go pricing model designed to fit your budget and project scope.